Everything You Need to Know About Supervised Learning

Supervised Learning is the Machine Learning task of learning a function that maps an input to an output based on example input-output pairs. It infers a function from labeled training data consisting of a set of training examples.

The evolution of Artificial Intelligence has changed the entire world in terms of technology. Its advances have come more quickly than we predicted, and with such rapid growth in Artificial Intelligence, Machine Learning is becoming one of the most prominent fields of this century. It is starting to redefine the way we live and the way we see the world.

So before diving into Supervised Learning directly, let's briefly explore the concept of Machine Learning.

What is Machine Learning?

- Machine Learning is a method of data analysis that automates analytical model building. Using algorithms that iteratively learn from data, Machine Learning allows computers to find hidden insights without being explicitly programmed.

We can also say that Machine Learning is a subset of Artificial Intelligence that focuses on getting machines to make decisions by feeding them data.

So now we know what Machine Learning is.

What are the Types of Machine Learning?

- Broadly, Machine Learning is divided into three types: Supervised Learning, Unsupervised Learning, and Reinforcement Learning.

What is Unsupervised Learning?

- Unsupervised Learning is a type of Machine Learning that looks for previously undetected patterns in a dataset with no pre-existing labels and a minimum of human supervision. Unsupervised Learning algorithms are not provided any “answers” to learn from; they must make sense of the data through observation alone.

What is Reinforcement Learning?

- Reinforcement Learning is a part of Machine Learning where an agent learns to behave in an environment by performing actions and seeing the results.

The agent performs an action and is either rewarded or penalized for it. Reinforcement Learning is all about taking appropriate actions in order to maximize the reward in a particular situation.

In other words, an agent is placed in an unknown environment and uses trial and error to figure out the environment and arrive at an appropriate outcome.

Now let's discuss today's topic, which is Supervised Learning.

Let's have a look at our agenda :

(i) What is Supervised Learning?

(ii) Supervised Learning Process

(iii) Supervised Learning and its types

(iv) Classification vs Regression

(v) Supervised Learning vs Unsupervised Learning

(vi) Supervised Learning Examples in Real Life

(vii) Advantages of Supervised Learning

(viii) Disadvantages of Supervised Learning

(ix) Supervised Learning Example in Python

(i) What is Supervised Learning?

- A Supervised Learning model has a set of input variables (x) and an output variable (y). An algorithm identifies the mapping function between the (x) and (y) variables. The relationship is y = f(x).

- Supervised Learning algorithms are trained using labeled examples, such as an input where the desired output is known. That means within our dataset we are going to have some historical features with historical labels.

- For example, say we are creating an e-mail spam detection system. We provide the algorithm with segments of text that each carry a category label. To do this, we take a collection of previous e-mails that someone has already gone through and labeled correctly as spam or ham (non-spam). The Machine Learning algorithm learns from this historical labeled data so that, when a new e-mail arrives, it can predict whether it is spam or ham.

Supervised Learning is commonly used in applications where historical data predicts likely future events.
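
To make the spam example concrete, here is a minimal sketch in Python. The six e-mails and their labels are made up for illustration, and the choice of a bag-of-words model with Naive Bayes is an assumption, not the only way to build a spam filter.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline

# Hypothetical historical e-mails that someone has already labeled
emails = [
    "win a free prize now",
    "free money claim your prize",
    "cheap loans win cash now",
    "meeting agenda for monday",
    "lunch with the project team",
    "please review the monday report",
]
labels = ["spam", "spam", "spam", "ham", "ham", "ham"]

# Turn each e-mail into word counts, then fit a Naive Bayes classifier on them
spam_filter = make_pipeline(CountVectorizer(), MultinomialNB())
spam_filter.fit(emails, labels)

# Predict labels for new, unseen e-mails
new_emails = ["claim your free cash prize", "agenda for the team meeting"]
predictions = spam_filter.predict(new_emails)
print(list(predictions))
```

With so little data this is only a toy, but the shape is the same as in a real system: labeled history goes in, and the model predicts labels for future e-mails.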

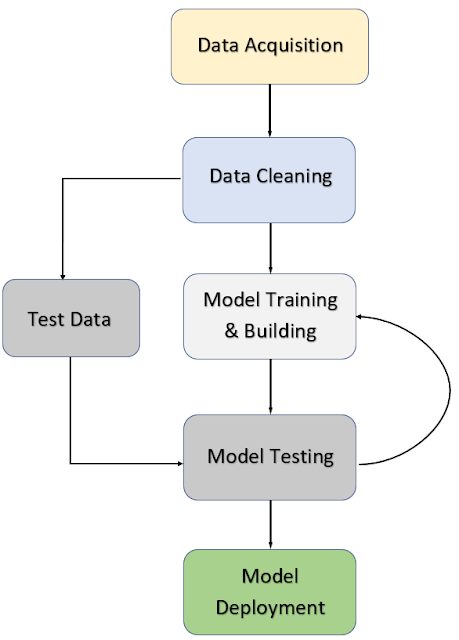

(ii) Supervised Learning Process in Machine Learning

Let's go through this step by step.

*Get the data. Where the data comes from depends on the domain you are working in: it could come from customer feedback, from research, or from an online database. At some point, the data has to be acquired.

*Once we have acquired the data, we need to clean and format it. We often do this using Pandas.

*Then we split the data into training data and testing data. We take a portion of our data, say 30%, as our test data and use the remaining 70% as our training data. We then fit our machine learning model to the training set.

*Next we want to know how our model performs. We run the test data's features through the model and compare the model's predictions to the correct labels, which we already know for the test data. This lets us evaluate the model. Based on that performance, we may want to go back and adjust the model's parameters, or change the split itself, for example by reducing the test size to 20% or 25%.

*Once we are satisfied with the results, we can deploy the model to the real world.
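
The steps above can be sketched in a few lines of scikit-learn. This is only an illustration: the data is synthetic (generated with make_classification as a stand-in for real, already cleaned data), and Logistic Regression is an arbitrary choice of model.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Step 1 and 2: get the data (synthetic here, so there is nothing to clean)
X, y = make_classification(n_samples=500, n_features=8, random_state=0)

# Step 3: hold out 30% of the rows as test data, train on the remaining 70%
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

# Fit the model to the training data only
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)

# Step 4: compare predictions on the test features with the known test labels
accuracy = accuracy_score(y_test, model.predict(X_test))
print(f"Test accuracy: {accuracy:.3f}")
```

Fixing random_state makes the split repeatable, which helps when you go back and compare different settings.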

(iii) Supervised Learning and its types

As we saw earlier, Supervised Learning algorithms are trained using labeled examples, such as an input where the desired output is known.

Types of Supervised Learning :

Supervised Learning is divided into 2 types.

(i) Classification :

- Classification is the process of categorizing a given set of data into classes. It can be performed on both structured and unstructured data. The process starts with predicting the class of given data points. The classes are often referred to as targets, labels, or categories. Classification predictive modeling is the task of approximating the mapping function from input variables to discrete output variables, and the main goal is to identify which class or category new data will fall into.

To simplify this, let's look at some examples: is an email spam or not, is a patient suffering from heart disease or not, etc.

Classification problems can be solved with numerous algorithms. Which algorithm you choose depends on the situation. Here are some of the popular classification algorithms.

*K-Nearest Neighbor

*Random Forest
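
As a rough sketch, both algorithms listed above can be tried side by side with scikit-learn. The Iris dataset and the settings used here (5 neighbors, 100 trees, a 30% test split) are assumptions chosen only for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Small built-in dataset with 3 flower classes
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

models = {
    "K-Nearest Neighbor": KNeighborsClassifier(n_neighbors=5),
    "Random Forest": RandomForestClassifier(n_estimators=100, random_state=42),
}

# Fit each classifier and record its accuracy on the held-out test data
scores = {}
for name, clf in models.items():
    clf.fit(X_train, y_train)
    scores[name] = clf.score(X_test, y_test)
    print(f"{name}: {scores[name]:.3f}")
```

Because both classifiers share the same fit and score interface, swapping one algorithm for another is usually a one-line change.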

(ii) Regression :

- Regression analysis is a predictive modeling technique. It estimates the relationship between a dependent variable, also called the target, and an independent variable, also known as a predictor.

The goal of a regression algorithm is to predict a continuous number such as sales, price, scores, etc. The equation for basic linear regression can be written as:

y = m*x + c

Where:

y : Dependent variable

x : Independent variable

c : y-intercept

m : Coefficient (slope)

For simple regression problems such as this, the model's predictions are represented by the line of best fit. For models using two independent variables, a plane is used instead. Finally, for a model using more than two independent variables, a hyperplane is used.
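
To see how a line of best fit recovers m and c, here is a small sketch using NumPy's least-squares polynomial fit. The five data points are hypothetical and lie exactly on y = 2x + 1, so the fit should return those values up to floating-point error.

```python
import numpy as np

# Hypothetical data lying exactly on the line y = 2x + 1
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 2.0 * x + 1.0

# A degree-1 least-squares fit returns the coefficients highest power first,
# which for a line is the slope m followed by the intercept c
m, c = np.polyfit(x, y, deg=1)
print(f"m = {m:.2f}, c = {c:.2f}")

# Use the fitted line to predict y for a new x
y_new = m * 5.0 + c
print(f"prediction at x = 5: {y_new:.2f}")
```

With real, noisy data the points will not sit exactly on the line, and the fit picks the m and c that minimize the squared error.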

To simplify this, let's look at some examples: predicting house prices, predicting student scores, etc.

There are many different types of regression algorithms. Common ones include :

*Polynomial Regression
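
Polynomial Regression can be sketched in scikit-learn by expanding the input into polynomial features and then fitting an ordinary linear model on them. The quadratic data below is made up for illustration, and degree 2 is an assumption that matches that data.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

# Hypothetical data following a curve: y = 0.5*x^2 - x + 2
x = np.linspace(-3, 3, 30).reshape(-1, 1)
y = 0.5 * x.ravel() ** 2 - x.ravel() + 2.0

# Polynomial regression: add x^2 as a feature, then fit a linear model
poly_model = make_pipeline(PolynomialFeatures(degree=2), LinearRegression())
poly_model.fit(x, y)

# A plain straight-line fit on the same data, for comparison
linear_model = LinearRegression().fit(x, y)

# R^2 score on the training data (1.0 means a perfect fit)
r2_poly = poly_model.score(x, y)
r2_linear = linear_model.score(x, y)
print(f"polynomial R^2: {r2_poly:.3f}, linear R^2: {r2_linear:.3f}")
```

The straight line cannot follow the curve, so its score is lower than the polynomial model's.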

(iv) Regression vs Classification

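Let's see the difference between Regression and Classification: classification maps inputs to discrete output classes, while regression predicts a continuous number.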

(v) Supervised Learning vs Unsupervised Learning

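Now let's see the difference between Supervised Learning and Unsupervised Learning: supervised algorithms are trained on labeled examples, while unsupervised algorithms look for patterns in data with no pre-existing labels.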
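
One way to see this difference in code is to look at what each kind of algorithm is given when it is trained. The sketch below uses made-up blob data, with Logistic Regression standing in for a supervised model and K-Means for an unsupervised one; both choices are assumptions for illustration.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.linear_model import LogisticRegression

# Two well-separated groups of hypothetical 2-D points
X, y = make_blobs(
    n_samples=200, centers=[[-3, -3], [3, 3]], cluster_std=1.0, random_state=0
)

# Supervised: the model is given both the features X and the labels y
classifier = LogisticRegression().fit(X, y)

# Unsupervised: the model is given only X and has to find the groups itself
clusterer = KMeans(n_clusters=2, n_init=10, random_state=0).fit(X)

print("Classifier predictions:", classifier.predict(X[:5]))
print("Cluster assignments   :", clusterer.labels_[:5])
```

Note that the cluster numbers are arbitrary: K-Means may call a group 0 where the labels call it 1, because it never saw the labels.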

(vi) Supervised Learning Examples in Real Life

As we saw, there are 2 types of Supervised Learning. Let's see how each is applied in the real world.

(a) Real-world implementation of Supervised Learning with respect to Classification :

- Rating of a product or a movie.

- Fraud detection.

- Spam or not spam.

- Risk analysis.

(b) Real-world implementation of Supervised Learning with respect to Regression :

- House price prediction.

- The amount of Fraud.

- Probability of Risk.

- Detecting the increase or decrease of Crime Rates.

(vii) Advantages of Supervised Learning

* You can be very specific about the definition of the classes, which means that you can train the classifier to learn a decision boundary that distinguishes the different classes accurately.

* You can determine exactly how many classes you want to have.

* After training, you don't necessarily need to keep the training examples in memory. You can keep the decision boundary as a mathematical formula, and that is enough for classifying future inputs.

(viii) Disadvantages of Supervised Learning

* Your decision boundary might be overtrained (overfitted). This means that if your training set does not include some examples that you want to have in a class, then when you use those examples after training you might not get the correct class label.

* When an input does not actually belong to any of the classes, it might still get a wrong class label after classification.

* You have to select a lot of good examples from each class while you are training the classifier. For the classification of big data, that can be a real challenge.

* Training needs a lot of computation time, and so can classification.

* You might need to use a cloud service and leave the training algorithm running overnight, or for several nights, before obtaining a good decision boundary.

(ix) Supervised Learning Example in Python

Let's see Supervised Learning examples using Python. We will work on 2 examples: the first is related to regression and the second to classification.

(a) Supervised Learning (Regression)

- Here we will be using Linear Regression to predict house prices in Boston.

Q.) Predict Boston House Prices Using Python and Linear Regression

# Importing Essential Libraries

import pandas as pd

import numpy as np

import seaborn as sns  # needed for the correlation heatmap below

import matplotlib.pyplot as plt

# Jupyter notebook magic; leave this line out when running as a plain script

%matplotlib inline

# Importing Data set

# Note: load_boston was removed in scikit-learn 1.2, so this example needs an older version

from sklearn.datasets import load_boston

bos_data = load_boston()

# Initializing the dataframe

data = pd.DataFrame(bos_data.data)

data.head()

Output:

# Adding the feature names to the dataframe

data.columns = bos_data.feature_names

data.head()

Output:

CRIM per capita crime rate by town

ZN proportion of residential land zoned for lots over 25,000 sq.ft.

INDUS proportion of non-retail business acres per town

CHAS Charles River dummy variable (= 1 if tract bounds river; 0 otherwise)

NOX nitric oxides concentration (parts per 10 million)

RM average number of rooms per dwelling

AGE proportion of owner-occupied units built prior to 1940

DIS weighted distances to five Boston employment centers

RAD index of accessibility to radial highways

TAX full-value property-tax rate per $10,000

PTRATIO pupil-teacher ratio by town

B 1000(Bk - 0.63)^2 where Bk is the proportion of blacks by town

LSTAT % lower status of the population

# Checking the shape of the dataset

data.shape

Output:

(506, 13)

# Overall Description of the dataset

data.describe().transpose()

Output:

# Adding a target variable to the data frame

# Median value of owner-occupied homes in $1000s

data['PRICE'] = bos_data.target

# Finding out the correlation between the features

corr = data.corr()

corr.shape

Output:

(14, 14)

# Plotting the heatmap of correlation between features

plt.figure(figsize=(16,10))

sns.heatmap(corr, cbar=True, annot=True, cmap="BuPu")

plt.ylim(15,0)

Output:

# Splitting target variable and independent variables

X = data.drop(['PRICE'], axis = 1)

y = data['PRICE']

# Splitting to training and testing data

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X,y, test_size = 0.2)

# Import library for Linear Regression

from sklearn.linear_model import LinearRegression

# Create a Linear regressor

model = LinearRegression()

# Train the model using the training sets

model.fit(X_train, y_train)

Output:

LinearRegression(copy_X=True, fit_intercept=True, n_jobs=None, normalize=False)

# Value of y-intercept

model.intercept_

Output:

32.1816401311959

# Value of Co-efficient

model.coef_

Output:

array([-1.09214028e-01, 4.57894028e-02, 4.59321522e-02, 3.40092338e+00,

-1.79347006e+01, 4.21895547e+00, -8.53459799e-03, -1.43742353e+00,

3.09455894e-01, -1.23217028e-02, -8.44931385e-01, 8.04204265e-03,

-4.88716167e-01])

# Model prediction on test data

y_pred = model.predict(X_test)

# Check the model performance using Mean Squared Error

from sklearn.metrics import mean_squared_error

print(mean_squared_error(y_test,y_pred))

Output:

23.9922269542709

So this was an example of predicting house prices in Boston using Linear Regression, which is a regression technique.
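
To see what the Mean Squared Error actually measures, here is a small sketch that computes it by hand on four made-up values and checks the result against scikit-learn. The root mean squared error and R^2 score are added as assumptions about what you might want to look at next; they are not part of the example above.

```python
import numpy as np
from sklearn.metrics import mean_squared_error, r2_score

# Hypothetical true values and model predictions
y_true = np.array([3.0, -0.5, 2.0, 7.0])
y_hat = np.array([2.5, 0.0, 2.0, 8.0])

# MSE is the average of the squared differences
mse_manual = np.mean((y_true - y_hat) ** 2)
mse_sklearn = mean_squared_error(y_true, y_hat)

# RMSE is in the same units as the target, which makes it easier to read
rmse = np.sqrt(mse_manual)

# R^2 compares the model against always predicting the mean
r2 = r2_score(y_true, y_hat)

print(f"MSE (manual): {mse_manual}, MSE (sklearn): {mse_sklearn}")
print(f"RMSE: {rmse:.4f}, R^2: {r2:.4f}")
```

Since the Boston target is in thousands of dollars, taking the square root of an MSE gives a typical error in those same units.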

(b) Supervised Learning (Classification)

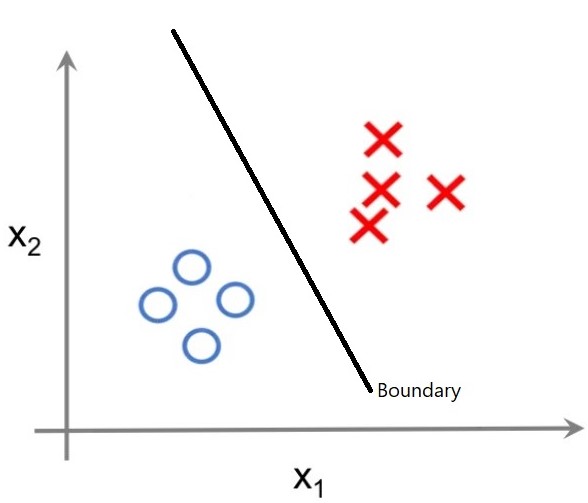

- A support vector machine (SVM) is a popular classification algorithm. It works by drawing a hyperplane in n-dimensional space such that it maximizes the margin between the classes.
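
The idea of maximizing the margin can be checked on a tiny, made-up dataset. In this sketch the four points are hypothetical, and a very large C is an assumption used to approximate a hard margin. For a linear SVM the margin width is 2 / ||w||, where w is the learned coefficient vector.

```python
import numpy as np
from sklearn import svm

# Four hypothetical 2-D points: two per class, clearly separable
X = np.array([[0.0, 0.0], [1.0, 1.0], [3.0, 3.0], [4.0, 4.0]])
y = np.array([0, 0, 1, 1])

# A very large C leaves almost no room for margin violations
clf = svm.SVC(kernel="linear", C=1e6)
clf.fit(X, y)

# For a linear kernel, coef_ holds the weight vector w of the hyperplane
w = clf.coef_[0]
margin_width = 2.0 / np.linalg.norm(w)

print("Support vectors:", clf.support_vectors_)
print(f"Margin width: {margin_width:.3f}")
print("Predictions:", clf.predict([[0.5, 0.5], [3.5, 3.5]]))
```

The closest points of the two classes are (1, 1) and (3, 3), so the margin width should come out close to the distance between them, about 2.83.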

Support Vector Machine

Q.) SVM on Breast Cancer Dataset

# Importing Essential Libraries

import pandas as pd

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

%matplotlib inline

# Import Dataset

from sklearn import datasets

cancer_data = datasets.load_breast_cancer()

# Creating Train & Test Data

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(cancer_data.data, cancer_data.target, test_size=0.2)

# Import Support Vector Machine

from sklearn import svm

cls = svm.SVC(kernel='linear')

# Training the model

cls.fit(X_train,y_train)

# Predict on the test data and check the accuracy

from sklearn import metrics

pred = cls.predict(X_test)

print("Accuracy :", metrics.accuracy_score(y_test,y_pred = pred))

Output:

Accuracy : 0.9473684210526315

# Precision score

print("Precision :", metrics.precision_score(y_test,pred))

Output:

Precision : 0.9506172839506173

# Recall score

print("Recall :", metrics.recall_score(y_test,pred))

Output:

Recall : 0.9746835443037974

# Classification Report

from sklearn.metrics import classification_report

print("Classification Report :",classification_report(y_test,y_pred=pred))
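
To see where accuracy, precision, and recall come from, here is a small sketch that derives them from a confusion matrix. The eight labels and predictions are made up for illustration and are not taken from the breast cancer run above.

```python
from sklearn import metrics

# Hypothetical true labels and predictions (1 = positive, 0 = negative)
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
y_hat = [1, 0, 1, 0, 1, 1, 0, 1]

# For binary labels, ravel() gives the counts in the order tn, fp, fn, tp
tn, fp, fn, tp = metrics.confusion_matrix(y_true, y_hat).ravel()

accuracy = (tp + tn) / (tp + tn + fp + fn)  # share of all predictions that are right
precision = tp / (tp + fp)  # share of predicted positives that are right
recall = tp / (tp + fn)  # share of actual positives that were found

print(f"Accuracy : {accuracy}")
print(f"Precision: {precision}")
print(f"Recall   : {recall}")
```

These hand-computed values match what accuracy_score, precision_score, and recall_score return for the same inputs.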
